Picture Credit: https://www.livemint.com/

We need some very human skills to work in tandem with our very high-tech machines

By Nish Bhutani, Founder & CEO – Indiginus

As published in the Mint on October 17, 2018

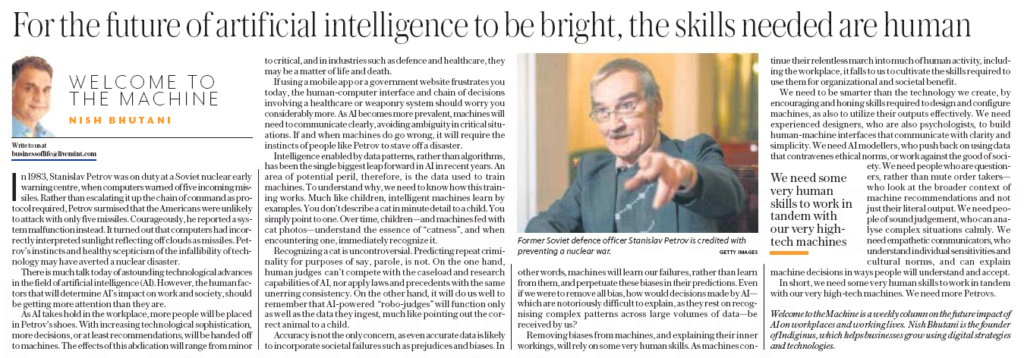

In 1983, Stanislav Petrov was on duty at a Soviet nuclear early warning centre when computers warned of five incoming missiles. Rather than escalating the alert up the chain of command as protocol required, Petrov surmised that the Americans were unlikely to attack with only five missiles. Courageously, he reported a system malfunction instead. It turned out that the computers had incorrectly interpreted sunlight reflecting off clouds as missiles. Petrov’s instincts and healthy scepticism about the infallibility of technology may have averted a nuclear disaster.

There is much talk today of astounding technological advances in the field of artificial intelligence (AI). However, the human factors that will determine AI’s impact on work and society should be getting more attention than they are.

As AI takes hold in the workplace, more people will be placed in Petrov’s shoes. With increasing technological sophistication, more decisions, or at least recommendations, will be handed off to machines. The effects of this abdication will range from minor to critical, and in industries such as defence and healthcare, they may be a matter of life and death.

If using a mobile app or a government website frustrates you today, the human-computer interface and chain of decisions involving a healthcare or weaponry system should worry you considerably more. As AI becomes more prevalent, machines will need to communicate clearly, avoiding ambiguity in critical situations. If and when machines do go wrong, it will require the instincts of people like Petrov to stave off a disaster.

Intelligence enabled by data patterns, rather than algorithms, has been the single biggest leap forward in AI in recent years. An area of potential peril, therefore, is the data used to train machines. To understand why, we need to know how this training works. Much like children, intelligent machines learn by examples. You don’t describe a cat in minute detail to a child. You simply point to one. Over time, children—and machines fed with cat photos—understand the essence of “catness”, and when encountering one, immediately recognize it.

Recognizing a cat is uncontroversial. Predicting repeat criminality for purposes of, say, parole is not. On the one hand, human judges can’t compete with the caseload and research capabilities of AI, nor apply laws and precedents with the same unerring consistency. On the other hand, we would do well to remember that AI-powered “robo-judges” will function only as well as the data they ingest, just as a child learns correctly only when we point to the right animal.

Accuracy is not the only concern, as even accurate data is likely to incorporate societal failures such as prejudices and biases. In other words, machines will learn our failures, rather than learn from them, and perpetuate these biases in their predictions. Even if we were to remove all bias, how would decisions made by AI—which are notoriously difficult to explain, as they rest on recognising complex patterns across large volumes of data—be received by us?

Removing biases from machines, and explaining their inner workings, will rely on some very human skills. As machines continue their relentless march into much of human activity, including the workplace, it falls to us to cultivate the skills required to use them for organizational and societal benefit.

We need to be smarter than the technology we create, by encouraging and honing the skills required to design and configure machines, as well as to use their outputs effectively. We need experienced designers who are also psychologists, to build human-machine interfaces that communicate with clarity and simplicity. We need AI modellers who push back on using data that contravenes ethical norms or works against the good of society. We need people who are questioners rather than mute order takers, who look at the broader context of machine recommendations and not just their literal output. We need people of sound judgement, who can analyse complex situations calmly. We need empathetic communicators, who understand individual sensitivities and cultural norms, and can explain machine decisions in ways people will understand and accept.

In short, we need some very human skills to work in tandem with our very high-tech machines. We need more Petrovs.

Welcome to the Machine is a weekly column on the future impact of AI on workplaces and working lives. Nish Bhutani is the founder of Indiginus, which helps businesses grow using digital strategies and technologies.